Privacy is the ability of a person to understand when, how, and to what extent their personal information is shared with others. Wikipedia defines data privacy as “the relationship between the collection and dissemination of data, technology, the public expectation of privacy, and the legal and political issues surrounding them.” While this definition describes what data privacy is, it does a poor job of illuminating the role it plays in our lives. A quote from columnist Peggy Noonan, first published in The Wall Street Journal in 2013, helps drive home the emotion the topic should evoke. Noonan wrote:

“Privacy is connected to personhood. It has to do with intimate things — the innards of your head and heart, the workings of your mind — and the boundary between those things and the world outside.”

In many jurisdictions, privacy is a right. In the U.S., the right to privacy is a legal concept in both tort law and constitutional law. Internationally, the right to privacy is recognized in Article 12 of the Universal Declaration of Human Rights (UDHR).

These laws and declarations set a precedent. However, two factors have opened the door for big tech companies and governments to obscure and overuse broad data collection practices: the ambiguity in how data privacy is defined, and the rapid pace at which technology and communications have evolved.

In recent years, growing awareness and new technologies have shed light on the gravity of the situation and offered ways to overcome the shortcomings of the world’s current data privacy practices. Unfortunately, technology is simply a tool. If these new technologies are misused, we will find ourselves dealing with the same invasions of our personal privacy, or worse, in the not-too-distant future.

Technology-based privacy concerns

Since the invention of the browser cookie in 1994, consumer data collection has led to countless leaks, breaches, and misuses of that data. See the timeline at the end of this article for some of the most significant events.

Instead of solving the underlying problems of data ownership, storage, and governance, companies and governments have relied almost exclusively on an archaic, albeit necessary, band-aid: Terms of Service that describe how particular web technologies collect and use data.

Current technology provides enormous benefits in our everyday lives. Consider Google Maps and Twitter. Google Maps makes even the most complex navigation an afterthought, while Twitter gives any individual unprecedented access to a global audience. As massive as these benefits are, they come at a cost. As our technology becomes more powerful, it also becomes more invasive.

From 1999 to 2022, the length of Google’s Terms of Service increased by more than 700%. There are many reasons for this, but the primary ones are: 1) the technology has become more complex and must aggregate more data to accomplish its goals; 2) growing legislation requires companies to describe the information they collect more clearly and transparently; and 3) it’s easier to throw lawyers and documentation at the problem to preserve the same business model (ad revenue) than to fundamentally change the underlying technology and incentives.

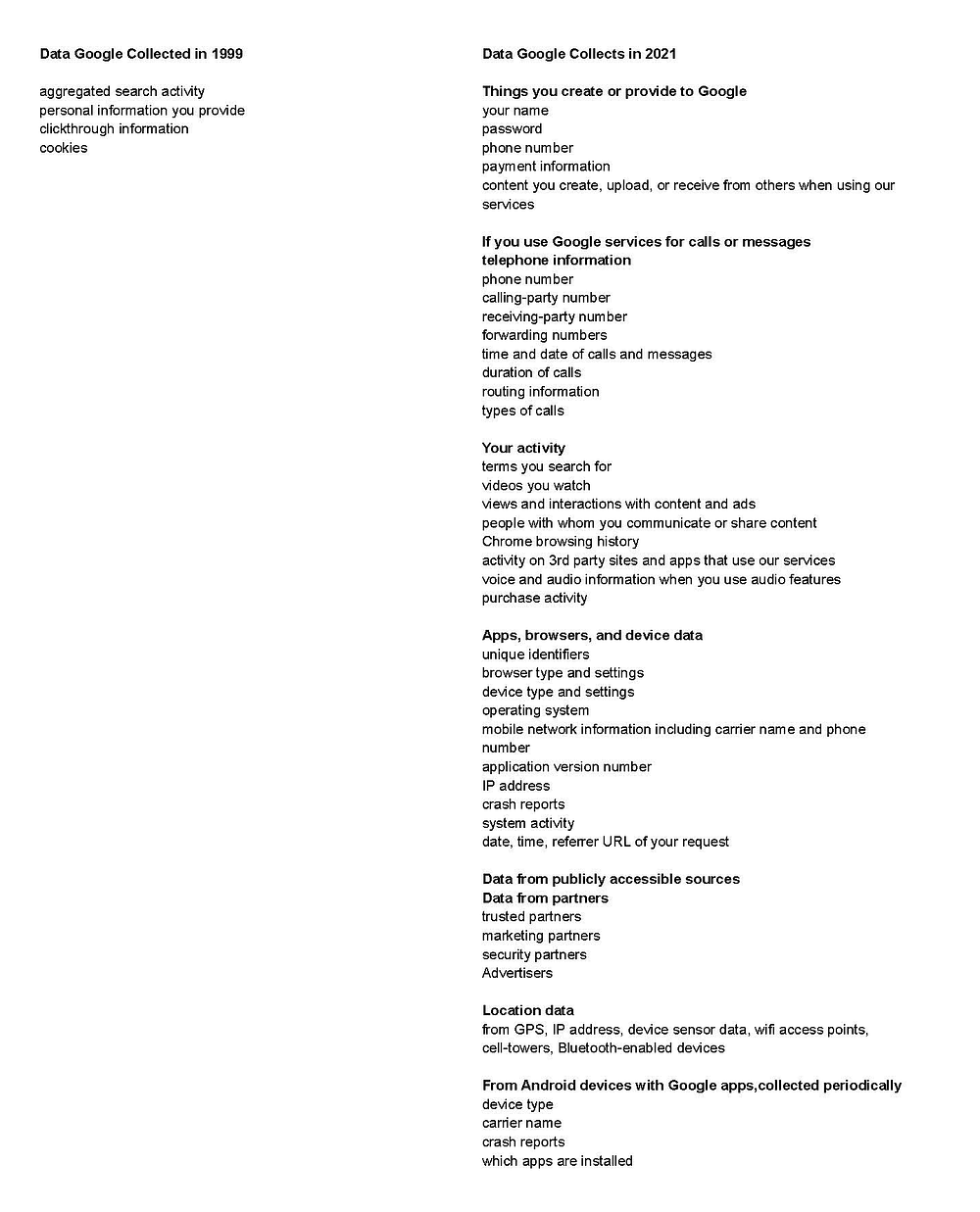

Here’s an example of the changes to the data Google collected in 1999 and 2021, respectively, taken directly from their privacy policy archives:

It’s not entirely clear, but one could infer that many of the data leaks, instances of data misuse, and broad data collection practices can be chalked up to oversight, ignorance, or a lack of foresight during implementation. That said, companies prioritize profits, especially when shareholders demand it. A company that didn’t would be bad at being a company. We must therefore maintain a constant push and pull between two forces: governance that reins in technology companies when they overstep their bounds, and the freedom to innovate beyond outdated systems.

In addition to the right balance of governance, building systems with privacy as the default would help mitigate these issues at their source.

Solutions for data privacy

Over the years, momentum has built behind both awareness and new technology to improve data privacy on the Internet. Organizations such as the Electronic Frontier Foundation (EFF) and the Center for Humane Technology are fighting for Internet privacy and consumer protections. Press coverage is steadily increasing as the general public becomes more aware of the issue. New legislation such as the GDPR and CCPA gives individuals more control over the collection of their data, through consent and opt-out rights, and requires companies to be transparent about the types of data they collect. New products and services, including DeleteMe and the Privacy Bee browser extension, enable individuals to remove unwanted public data from the Internet. Finally, new technologies are emerging that could fundamentally change how data is used and stored for the better.

Web3

Legislation and documentation alone are not enough. Privacy must be inherent in the way the system functions. If used appropriately, Web3 has the potential to lay the foundation for adding not only a monetization layer to the Internet but also a new data control layer. The combination of decentralized identifiers, decentralized data storage, encryption, and non-fungible tokens can enable a better Internet in two primary ways.

Data self-sovereignty and security for individuals and consumers

If we can shift the current paradigm from storing data on servers or cloud infrastructure owned by individual companies to storing it on decentralized storage solutions (IPFS, Filecoin, Arweave, Sia, Ceramic Network), then ownership of that data can move from companies to individuals. This new paradigm is not unlike the way cryptocurrency moves ownership of money away from banks and into individual wallets.
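To make this more concrete: systems like IPFS locate data by a hash of its contents rather than by the company server that holds it. Any node can store a copy, and anyone can check that a copy is genuine. The Python sketch below illustrates only that content-addressing idea, under simplifying assumptions. `ContentStore` is a hypothetical in-memory class. A plain SHA-256 hex digest stands in for IPFS’s real CID format. The encryption and key management that user ownership would also require are left out.

```python
import hashlib


class ContentStore:
    """Hypothetical, in-memory content-addressed store (not the IPFS API)."""

    def __init__(self) -> None:
        self._blocks: dict[str, bytes] = {}

    def put(self, data: bytes) -> str:
        # The address is derived from the bytes themselves,
        # not from which company's server happens to hold them.
        address = hashlib.sha256(data).hexdigest()
        self._blocks[address] = data
        return address

    def get(self, address: str) -> bytes:
        data = self._blocks[address]
        # Whoever asked for the data can verify it by re-hashing it.
        if hashlib.sha256(data).hexdigest() != address:
            raise ValueError("content does not match its address")
        return data


# Two independent "nodes" storing the same bytes agree on the address.
node_a, node_b = ContentStore(), ContentStore()
record = b"my profile data"
addr_a = node_a.put(record)
addr_b = node_b.put(record)
assert addr_a == addr_b  # same content, same address, regardless of who stores it
print(node_b.get(addr_a))  # b'my profile data'
```

Because the address is derived from the data, it no longer matters which server answers a request. What matters is who holds the keys, which is where encryption comes in.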

This shift could benefit all parties. It would provide better security over the data, meaning fewer data leaks in the headlines for companies. It would move ownership back to the data’s true owner, the user. It could also significantly reduce costs for companies, because they would no longer bear sole ownership of, and responsibility for, storing this data.

Data transparency and security for companies, governments, and organizations

The second way Web3 improves the Internet is through data transparency for the parties that should be held accountable for how data is used (data audit trails). By shifting to decentralized data technologies, owners can better track how their data is accessed and used. Additionally, programmatic controls can be built in to provide access to anonymized information for KYC/AML purposes when necessary.
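One common way to build such an audit trail is a hash chain. Every entry commits to the hash of the entry before it, so rewriting any past record breaks the chain from that point on. Below is a minimal Python sketch of that idea. The `AuditLog` class and the event fields are hypothetical and are not taken from any particular Web3 protocol.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


@dataclass
class AuditLog:
    """Hypothetical append-only, hash-chained log of data-access events."""

    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _digest(prev_hash: str, event: dict) -> str:
        # Canonical JSON (sorted keys) so the same event always hashes the same way.
        payload = json.dumps({"prev": prev_hash, "event": event}, sort_keys=True)
        return hashlib.sha256(payload.encode()).hexdigest()

    def append(self, event: dict) -> str:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry_hash = self._digest(prev_hash, event)
        self.entries.append({"event": event, "prev": prev_hash, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        # Recompute every link; any edited event or broken link fails the check.
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev"] != prev_hash:
                return False
            if entry["hash"] != self._digest(prev_hash, entry["event"]):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append({"who": "acme-corp", "action": "read", "field": "email"})
log.append({"who": "acme-corp", "action": "share", "field": "email", "with": "ad-partner"})
print(log.verify())  # True

log.entries[1]["event"]["with"] = "nobody"  # quietly rewrite history...
print(log.verify())  # ...and verification fails: False
```

On its own, a party that controls the entire log could simply recompute every hash after tampering. Outsiders can only check the trail once the latest hash is published somewhere it cannot be quietly changed, such as a public blockchain.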

The challenges of Web3

Like any technology, a blockchain is simply a tool that can be used in many ways. Just as Web3 can improve data privacy, it also has the potential to have the opposite effect. Most current blockchain implementations and decentralized storage solutions are public by default. On public blockchains, all transactions, interactions, and stored data are available for anyone to view and track forever, a concerning notion for individuals and companies alike. There are good use cases for permanent public data, but it certainly isn’t appropriate for everything. Most people probably don’t want all of their bank transactions, investment portfolios, and Social Security numbers publicly listed on the Internet, and we should expect the same to be true for Web3. Unfortunately, platforms like Chainalysis are already taking advantage of this transparency to learn what data exists on most blockchains and whom it belongs to.
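To see why public-by-default ledgers leak so much, consider one well-known heuristic from blockchain analysis. When several addresses are spent together as inputs to a single transaction, they are most likely controlled by the same wallet. The sketch below applies this "common-input-ownership" heuristic to a few made-up transactions, using a union-find structure. It is an illustrative assumption about how such clustering can work, not a description of Chainalysis’s actual methods, which combine many more signals.

```python
from collections import defaultdict

# Hypothetical public transactions: each lists the addresses it spends from.
transactions = [
    {"inputs": ["addr1", "addr2"], "outputs": ["shop"]},
    {"inputs": ["addr2", "addr3"], "outputs": ["exchange"]},
    {"inputs": ["addr4"], "outputs": ["addr5"]},
]

parent: dict[str, str] = {}


def find(addr: str) -> str:
    """Return the representative of addr's cluster (union-find with path halving)."""
    parent.setdefault(addr, addr)
    while parent[addr] != addr:
        parent[addr] = parent[parent[addr]]
        addr = parent[addr]
    return addr


def union(a: str, b: str) -> None:
    parent[find(a)] = find(b)


# Common-input-ownership heuristic: inputs spent together likely share an owner.
for tx in transactions:
    first, *rest = tx["inputs"]
    find(first)  # register single-input addresses too
    for addr in rest:
        union(first, addr)

clusters: dict[str, set[str]] = defaultdict(set)
for addr in parent:
    clusters[find(addr)].add(addr)

print(sorted(sorted(c) for c in clusters.values()))
# [['addr1', 'addr2', 'addr3'], ['addr4']]
```

Suppose any address in a cluster is tied to a real identity, for example through an exchange account. Then every address in that cluster is exposed, along with its full history.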

To overcome the privacy issues associated with public blockchains, technologies with encryption and permission controls built in are beginning to proliferate. Examples include zero-knowledge proofs, secure hardware enclaves, and encrypted distributed file storage.

- Zero-knowledge proofs let an entity prove that a statement is true, such as that it owns certain data. The proof reveals nothing beyond the fact that the statement is true, including who the entity is or what the underlying data contains.
- Secure hardware enclaves can store and process encrypted data on a device without letting parties who have access to the device obtain that information.
- Encrypted distributed file storage solutions can store data publicly on the Internet in a form that only its owner can read.

Combining these tools makes a more secure and self-sovereign Internet possible; however, (you guessed it) it comes with some downsides.
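Here is a small illustration of the zero-knowledge idea: a toy Schnorr proof of knowledge, made non-interactive with the Fiat-Shamir heuristic. The prover convinces a verifier that it knows the secret exponent `x` behind a public value `y = G^x mod P`, without revealing `x`. This is a sketch under explicit assumptions:

- The group parameters `P`, `Q`, and `G` are hypothetical toy values, far too small to be secure.
- The function names are illustrative.
- Production systems use vetted libraries and much larger groups or elliptic curves.

Proving statements about data ownership typically relies on richer proof systems such as zk-SNARKs. The core property, verification without disclosure, is the same.

```python
import hashlib
import secrets

# Toy group (hypothetical, insecure): P = 2*Q + 1 is a safe prime,
# and G = 4 generates the subgroup of order Q.
P, Q, G = 2039, 1019, 4


def _challenge(y: int, t: int, context: bytes) -> int:
    # Fiat-Shamir: replace the verifier's random challenge with a hash of the transcript.
    data = f"{G}|{y}|{t}|".encode() + context
    return int.from_bytes(hashlib.sha256(data).digest(), "big") % Q


def keygen() -> tuple[int, int]:
    x = secrets.randbelow(Q - 1) + 1  # secret in [1, Q-1], never shared
    return x, pow(G, x, P)  # public value y = G^x mod P


def prove(x: int, y: int, context: bytes) -> tuple[int, int]:
    r = secrets.randbelow(Q - 1) + 1  # fresh random nonce for every proof
    t = pow(G, r, P)  # commitment
    c = _challenge(y, t, context)
    s = (r + c * x) % Q  # response; r masks x, so s alone reveals nothing about x
    return t, s


def verify(y: int, proof: tuple[int, int], context: bytes) -> bool:
    t, s = proof
    c = _challenge(y, t, context)
    # G^s = G^(r + c*x) = t * y^c (mod P), since G has order Q.
    return pow(G, s, P) == (t * pow(y, c, P)) % P


x, y = keygen()
proof = prove(x, y, b"login to example-service")
print(verify(y, proof, b"login to example-service"))  # True
forged = (proof[0], (proof[1] + 1) % Q)
print(verify(y, forged, b"login to example-service"))  # False
```

The verifier only ever sees `y`, `t`, and `s`. Binding the proof to a `context` string keeps it from being replayed as-is elsewhere, although with toy parameters this binding is weak.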

The dichotomy of perfect encryption

One of the primary downsides of privacy-first technologies is the trade-off inherent in perfect encryption. Encryption is an all-or-nothing technology. It secures everything we do on the Internet, including credit card payments, connections to our bank accounts, and private conversations with friends and family. The trouble is, it also enables bad actors to carry out nefarious activity in secret. To overcome this problem, many have proposed adding “backdoors” to encryption or outlawing encryption for public use altogether. Unfortunately, both options would only further empower bad actors. Among numerous other issues, backdoors would eventually be discovered and exploited no matter how sophisticated they were. Banning encryption would strip critical protections from everyone who abides by the law.

Philip Zimmermann publicly released Pretty Good Privacy (PGP), one of the first encryption tools available on the Internet. After the 9/11 attacks in 2001, he was told that the terrorists had likely used PGP to coordinate the attacks. He responded, “The intellectual side of me is satisfied with the decision, but the pain that we all feel because of all the deaths mixes with this,” adding, “It has been a horrific few days.”

Encryption is required for the Internet to function correctly, but it comes at a cost. The only path forward is to keep improving the Internet. That means investing the resources needed to mitigate nefarious use without encroaching on the protections encryption provides for everyone else.

Decentralization

Decentralized and fully autonomous technologies can accomplish extraordinary tasks. But because they are built by humans, they are prone to errors and governance challenges. All software contains bugs, especially nascent software that has not yet stood the test of time. Bugs introduced into systems designed to be permanent and autonomous can be particularly damaging. In addition, the original design may be fundamentally flawed, or it may not account for every potential future scenario. Decentralized autonomous organization (DAO) governance can help resolve some of these issues (an in-depth topic for another time). At the end of the day, though, we are still dealing with people-based problems that require thoughtful debate, collaboration, and iterative improvement.

Client-side encryption

Another difficult challenge is that publicly available encrypted data (such as the data produced by the technologies mentioned above) creates a honeypot. When valuable data, or monetary value itself, is available to the public in encrypted form, hackers have an incentive to break the encryption and earn a payday. In practice, this means these systems must withstand the world’s most sophisticated hackers and attacks. Client-side encryption is still viable because unbreakable encryption does exist, but it requires a flawless implementation. As previously mentioned, writing bug-free code is particularly challenging for immature technologies.
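The textbook example of unbreakable encryption is the one-time pad. It is provably secure only if the key is truly random, as long as the message, kept secret, and never reused. The Python sketch below assumes the one-time pad is a fair stand-in for the point being made. Real client-side encryption typically uses ciphers such as AES-GCM, with keys that never leave the user’s device. The function names and messages here are hypothetical. The sketch shows both halves of the argument: used correctly, the pad hides the message completely, but a single implementation slip (reusing a key) lets an attacker recover a message without ever learning the key.

```python
import secrets


def otp_encrypt(plaintext: bytes) -> tuple[bytes, bytes]:
    """Encrypt with a fresh one-time pad; returns (key, ciphertext)."""
    # The key must be truly random, exactly as long as the message, and used once.
    key = secrets.token_bytes(len(plaintext))
    ciphertext = bytes(p ^ k for p, k in zip(plaintext, key))
    return key, ciphertext


def otp_decrypt(key: bytes, ciphertext: bytes) -> bytes:
    if len(key) != len(ciphertext):
        raise ValueError("key and ciphertext must be the same length")
    # XOR is its own inverse, so applying the key again restores the plaintext.
    return bytes(c ^ k for c, k in zip(ciphertext, key))


# Correct use: without the key, the ciphertext reveals nothing but its length.
key, ct1 = otp_encrypt(b"meet at noon")
assert otp_decrypt(key, ct1) == b"meet at noon"

# Implementation slip: the same key is reused for a second 12-byte message.
ct2 = bytes(p ^ k for p, k in zip(b"pin is 4821!", key))

# XORing the two ciphertexts cancels the key, leaving plaintext1 XOR plaintext2.
leak = bytes(a ^ b for a, b in zip(ct1, ct2))

# An attacker who guesses (or already knows) one message recovers the other.
recovered = bytes(x ^ g for x, g in zip(leak, b"meet at noon"))
print(recovered)  # b'pin is 4821!'
```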

The bottom line

Creating the perfect Internet will be hard… really hard, but it’s absolutely worth fighting for. Such an Internet would enable self-sovereign data while giving inventors the freedom to innovate and companies and organizations the ability to provide quality services.

Technology is incredibly powerful and must be created with immense care. Builders are responsible for being mindful of the technology they develop and introduce to the world. For many, it’s easy to get wrapped up in building the “next big thing” without pausing to consider the impact it might have on society.

In the coming years, we’re going to have to work together relentlessly to create the future we all want to see. In the meantime, it probably makes sense to keep using ape JPEGs and 8-bit RPGs to prove out the potential of these new technologies before we start taking ourselves too seriously.

A timeline of privacy concerns

*This timeline is not comprehensive.

1994 — Netscape: Netscape invents the cookie, which advertisers soon exploit to track users across the Internet.

1995 — European Data Protection Directive Adopted: Websites began collecting customer data during the first tech boom, raising privacy concerns. In response, the European Union adopts a directive governing the processing of personal data on October 24, 1995.

1997 — Electronic Privacy Information Center: EPIC reviews 100 of the Internet’s most popular sites and finds that only 17 of them have an explicitly stated privacy policy.

Of the 100 websites examined, 24 use cookies, and none allow users to access their data. EPIC concludes that data privacy will be one of the biggest challenges for the Internet.

1999 — DoubleClick: A privacy scandal erupts when DoubleClick announces plans to de-anonymize its ad data. The move threatens the privacy of millions of consumers whose behavior it had tracked.

2000 — Google AdWords Launches: AdWords uses cookies, along with the keywords users search for, to decide which ads to display across Google’s advertising affiliate network.

2000s — Patriot Act: Among other tracking tools, the Patriot Act enables the NSA to monitor “the international telephone calls and international email messages of hundreds, perhaps thousands, of people inside the United States.” Later, Edward Snowden leaks information revealing the vast scope of the NSA’s data collection technology.

2004 — Hacking Awareness: 2004 sees rampant hacker attacks. Attackers exploit web software vulnerabilities to intercept sensitive data, using methods such as Trojans, keystroke loggers, and other malware.

2004 — Facebook Inception: Zuckerberg uploads photos and information about Harvard students without their consent. The Harvard administration charges him with breaching security, violating copyrights, and violating individual privacy.

2006 — Facebook News Feed: A significant portion of Facebook’s more than 8 million users are unhappy that every move in their personal lives is now broadcast in a feed to their friends.

2007 — Facebook Beacon: After multiple incidents, Facebook apologizes for releasing Beacon, a feature that told people what their friends had bought.

2007 — Google + DoubleClick: Google acquires DoubleClick for $3.1 billion.

2007 — Google Cookies: Google Watch, launched by the nonprofit group Public Information Research, raises questions about Google’s storage of cookies. In 2007, these cookies had a lifespan of more than 32 years and incorporated a unique ID that enabled the creation of a user data log. Google shares this information with law enforcement and other government agencies upon request. The majority of these requests do not involve review or approval by any court or judge.

2008 — Facebook Social Login: Facebook launches “social login,” which lets “partnered” websites access details from users’ Facebook profiles. These include their full names, photos, wall posts, and friend lists. Twitter, LinkedIn, and Google+ follow with their own social logins in 2009, 2010, and 2011, respectively.

2009 — Facebook Copyrights: Following public outrage, Facebook modifies its terms of use to give users more rights over their data. (Facebook had previously reserved the right to use all content users uploaded to the site.)

2009 — Google Chinese Hack: In 2009, a group of hackers working for the Chinese government penetrated the servers of Google and other prominent American companies, such as Yahoo and Dow Chemical. In a January 2010 blog post, Google indicated that the goal of the attack appeared to be digging up information on Chinese human rights activists.

2009 — Google Docs Leak: A bug in Google Docs allowed unintended access to some private documents, affecting 0.05% of all documents stored on the service.

2010 — Google Street View: Regulators in the Czech Republic and elsewhere push back on Street View. The Czech Office for Personal Data Protection describes Google’s program as taking pictures “beyond the extent of the ordinary sight from a street.” The Office claims that the program “disproportionately invaded citizens’ privacy.”

2011 — Google Buzz v FTC: Google agrees to settle Federal Trade Commission charges that it used deceptive tactics and violated its own privacy promises to consumers when it launched its social network Google Buzz in 2010.

2011 — Facebook FTC Charges: The FTC charges that Facebook falsely claimed third-party apps could access only the data they needed to operate. In fact, the apps could access nearly all of a user’s personal data.

2012 — Facebook + Instagram: Facebook acquires Instagram for $1 billion. Under a subsequent change to Instagram’s Terms of Use, all users agree by default that businesses can pay Instagram to display their usernames, likenesses, photos (with metadata), and behavior as a form of advertising.

2012 — Google Buzz v FTC Round 2: Google agrees to pay $22.5 million to settle FTC charges that it violated its 2011 Buzz settlement. The FTC charged that Google misrepresented to Safari users that it would not place tracking cookies on their browsers. The original Buzz charges stemmed from thousands of user complaints about the public disclosure of their email contacts. In some cases, these contacts included ex-spouses, patients, students, employers, or competitors.

2012 — Google Data Expansion: Google announces it will integrate user data across its email, video, social networking, and other services. In a blog post, the company explains the plan will lead to “a beautifully simple, intuitive user experience across Google.”

2013 — Facebook Bug: A bug exposed the email addresses and phone numbers of 6 million Facebook users to anyone who had some connection to the person or knew at least one piece of their contact information.

2014 — Facebook Mood Manipulation: Facebook’s mood-manipulation experiment included more than half a million randomly selected users, whose news feeds Facebook altered to show more positive or negative posts. The purpose of the study was to show how emotions could spread on social media. The results were published in the Proceedings of the National Academy of Sciences in 2014. They kicked off a firestorm of backlash over whether the study was ethical.

2014 — Google Gmail leak: While it wasn’t immediately clear how the information was obtained, in September 2014, almost 5 million Gmail addresses and passwords were published online.

2015 — Experian Hack: 15 million people who applied for Experian credit checks have their personal information exposed. The exposed data includes their names, addresses, and Social Security, driver’s license, and passport numbers.

2015 — Facebook App Data: Facebook finally cuts off apps that could obtain all the data of connected users. However, it can’t retroactively undo the release of data that has already been shared.

2015 — Google: In September 2015, Check Point researchers discover that an app called BrainTest, listed on the Google Play Store, is infecting Android devices with pernicious, hard-to-remove malware. Using obfuscation techniques, its developers were able to deceive Google Bouncer and land on Google’s app storefront.

2016 — Google DoubleClick Ads: Google drops its ban on personally identifiable information in its DoubleClick ad service. Google’s privacy policy is changed to state that it “may” combine web-browsing records obtained through DoubleClick with information from its other services. This means Google could now, if it wished, “build a complete portrait of a user by name, based on everything they write in email, every website they visit and the searches they conduct.”

2016 — Google Android Bug: In November 2016, cybersecurity company Check Point discovers Gooligan, malware that at the time was infecting 13,000 devices every day. It appears to have spread through a combination of phishing and third-party app store downloads.

2017 — Equifax Hack: The personal data of 145.5 million customers is stolen. The data includes Social Security numbers, driver’s license numbers, names, dates of birth, and addresses.

2018 — Facebook Privacy Scandals: Facebook is hit by more than 21 privacy scandals. The most public of these reveals that Cambridge Analytica, a British political consulting firm, collected data from millions of Facebook user profiles and used it for political advertising. It is also revealed that Facebook was aware of this data collection and did little about it, even though the collection violated the company’s Terms of Use.

2018 — Facebook GDPR: Facebook is forced to comply with the newly enforceable GDPR. However, the resulting updates to its application center on minor user interface changes rather than changes to its actual data collection practices.

2018 — Facebook Belgium: Facebook is ordered to delete all data it collected illegally from Belgians, or risk fines of up to 100 million euros. The order covers people who aren’t Facebook users but may still have landed on a Facebook page.

2018 — Google+ Breaches: Multiple bugs expose Google+ users’ data, including one that affects 52.5 million users. Another bug, present from 2015 until March 2018, allowed third-party developers to access Google+ users’ private data.

2018 — Google Tracking: Google tracks users’ location data, sometimes without permission. Activities such as automatic weather updates and online searches (including searches that weren’t location-specific) record the user’s location. To stop this, users had to turn off “web and app activity” tracking, even though that privacy setting said nothing about location data. While not a breach, many considered it a significant privacy violation, potentially affecting up to 2 billion users.

2019 — Zoom Flaws: Zoom adds a feature to create a frictionless experience for Mac users. The feature introduces a vulnerability that allows an attacker to access a user’s webcam feed without their knowledge.

2019 — Google Child Privacy: Google agrees to pay a $170 million fine for violating child privacy laws through its collection of data on children.

2020 — Twitter “Opt-Out”: Twitter changes the way its privacy “Opt-Out” settings work. Anyone outside GDPR-compliant regions can no longer opt out of sharing data with Twitter’s business partners.

2020 — Twitter Data Misuse: Twitter confirms that it is under investigation by the U.S. Federal Trade Commission (FTC) for potentially misusing people’s personal information to serve ads. It adds that it could face a fine of $150 million to $250 million.

2020 — Google Misleading Users: An Australian watchdog accuses Google of misleading millions of Australian users about the collection and use of their private data. The watchdog alleges that starting in 2016, Google combined Google account information with activity from non-Google sites that relied on Google technologies, in order to display ads.

2020 — Google Lawsuit: Google faces a $5 billion lawsuit for tracking the “private” browsing of millions of users. The suit covers essentially anyone who used Incognito mode since June 1, 2016.

2020 — Covid Contact Tracing: Concerns emerge that contact-tracing technology created by Google and others could overstep its intended purpose.

2020 — Zoom: Reports of uninvited participants showing up in and derailing private Zoom meetings make headlines. Eric Yuan, founder and CEO of Zoom, issues a statement in response to privacy and safety concerns about the app.

Sources

https://www.npr.org/2013/06/11/190721205/privacy-in-retreat-a-timeline

https://get.theappreciationengine.com/2020/02/19/data-privacy-timeline/

http://libc.y-net.work/wtc/zimmermann.html

https://www.eff.org/nsa-spying/timeline

https://safecomputing.umich.edu/privacy/history-of-privacy-timeline

https://www.thevpnexperts.com/vpn/timeline-of-online-privacy/

https://techcrunch.com/2018/04/17/facebook-gdpr-changes/

https://www.nbcnews.com/tech/social-media/timeline-facebook-s-privacy-issues-its-responses-n859651

https://www.google.com/transparencyreport/userdatarequests

https://www.wsj.com/articles/noonan-what-we-lose-if-we-give-up-privacy-1376607191